iLRN 2022: Simulated Gaze Tracking using Computer Vision for Virtual Reality

Kai is an undergraduate student in Tokyo Tech's GSEP program who volunteered to do research at the Cross Lab. I served as his mentor on his first research project at the lab, which he presented as a short paper at the iLRN conference. The presentation took place at 1 a.m. Tokyo time on June 1, 2022, so the schedule was quite tough.

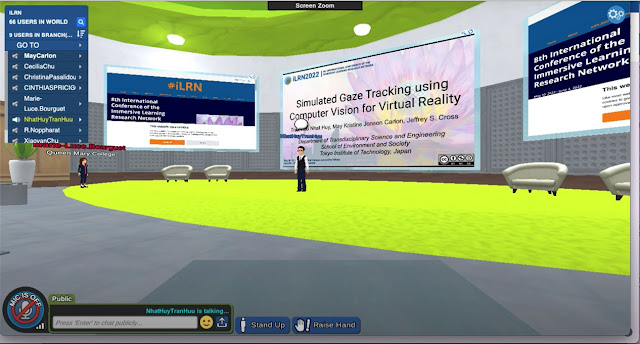

Kai presenting his work at the virtual campus

For a copy of relevant materials (e.g., presentation, paper) or any questions you may have, please feel free to reach out to me through the Contact Me gadget on this blog's sidebar.

Details

Title: Simulated Gaze Tracking using Computer Vision for Virtual Reality

Authors: Tran Huu Nhat Huy, May Kristine Jonson Carlon and Jeffrey S. Cross

Specifics: May 30 to June 4, 2022, pp. 373-376, Vienna, Austria (in person) and online in iLRN's Virtual Campus powered by Virbela

Abstract

As Virtual Reality (VR) is becoming more viable for creating immersive learning environments, a promising area of study is in monitoring and assessing learner's engagement. Several related works in this area have used gaze tracking. Unfortunately, gaze tracking in VR is currently limited by hardware availability. This paper presents an approach for engagement monitoring that does not require the traditional costs of a more sophisticated hardware setup. A simple combination of video processing techniques was used to show which contents capture the user's attention, similar to the information current gaze trackers provide. However, success with this method was only evident with a sample video; refinements are still needed to cater to unpredictable changes in the field of view. After fine-tuning the process, this approach may potentially benefit instructional VR content developers in determining which content grabs their learners' attention effectively.
